Driving in the Snow is a Team Effort for AI Sensors

Nobody likes driving in a blizzard, including autonomous vehicles. To make self-driving cars safer on snowy roads, engineers look at the problem from the car’s point of view.

A major challenge for fully autonomous vehicles is navigating bad weather. Snow especially confounds crucial sensor data that helps a vehicle gauge depth, find obstacles and keep on the correct side of the yellow line, assuming it is visible. Averaging more than 200 inches of snow every winter, Michigan’s Keweenaw Peninsula is the perfect place to push autonomous vehicle tech to its limits. In two papers presented at SPIE Defense + Commercial Sensing 2021, researchers from Michigan Technological University discuss solutions for snowy driving scenarios that could help bring self-driving options to snowy cities like Chicago, Detroit, Minneapolis and Toronto.

Just like the weather at times, autonomy is not a sunny or snowy yes-no designation. Autonomous vehicles cover a spectrum of levels, from cars already on the market with blind spot warnings or braking assistance, to vehicles that can switch in and out of self-driving modes, to others that can navigate entirely on their own. Major automakers and research universities are still tweaking self-driving technology and algorithms. Occasionally accidents occur, either due to a misjudgment by the car’s artificial intelligence (AI) or a human driver’s misuse of self-driving features.

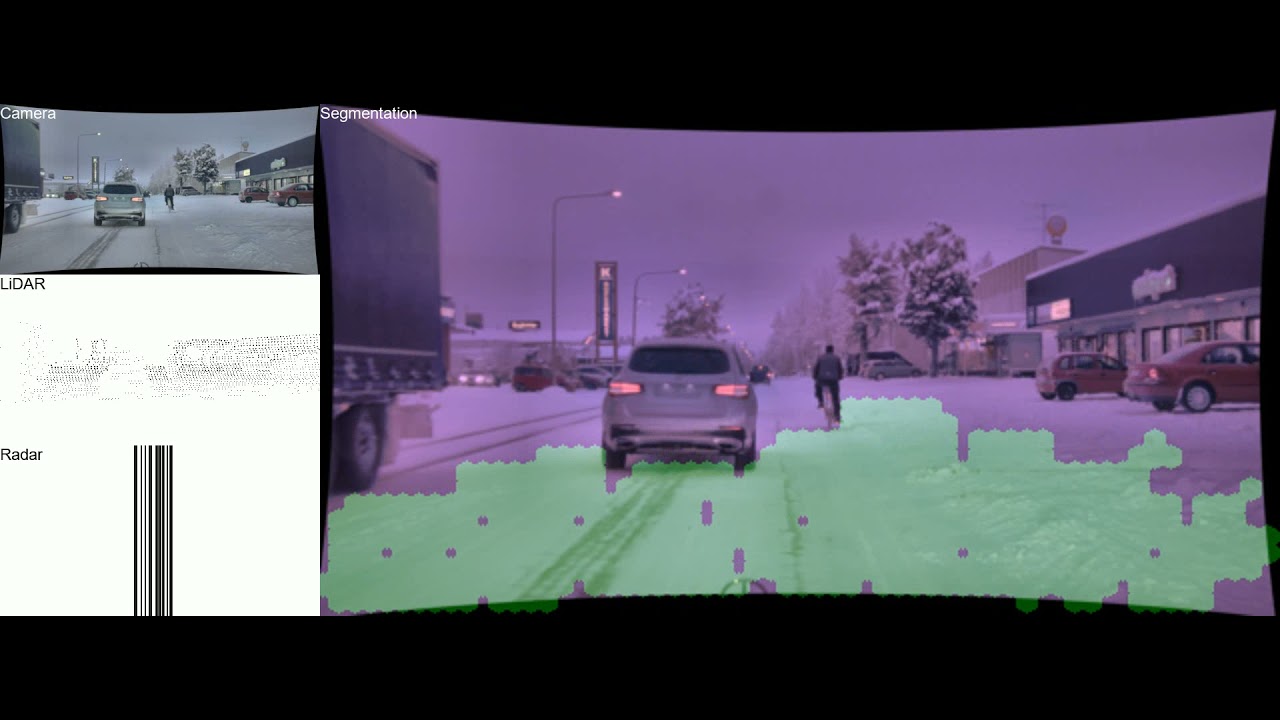

Drivable path detection using CNN sensor fusion for autonomous driving in the snow

A companion video to the SPIE research from Rawashdeh’s lab shows how the artificial intelligence (AI) network segments the image area into drivable (green) and non-drivable regions. The AI processes, and fuses, each sensor’s data despite the snowy roads and seemingly random tire tracks, while also accounting for crossing and oncoming traffic.

Sensor Fusion

Humans have sensors, too: our scanning eyes, our sense of balance and movement, and the processing power of our brain help us understand our environment. These seemingly basic inputs allow us to drive in virtually every scenario, even if it is new to us, because human brains are good at generalizing novel experiences. In autonomous vehicles, two cameras mounted on gimbals scan and perceive depth using stereo vision to mimic human vision, while balance and motion can be gauged using an inertial measurement unit. But computers can only react to scenarios they have encountered before or been programmed to recognize.

Since artificial brains aren’t around yet, task-specific AI algorithms must take the wheel, which means autonomous vehicles must rely on multiple sensors. Fisheye cameras widen the view while other cameras act much like the human eye. Infrared picks up heat signatures. Radar can see through the fog and rain. Light detection and ranging (lidar) pierces through the dark and weaves a neon tapestry of laser beam threads.

“Every sensor has limitations, and every sensor covers another one’s back,” said Nathir Rawashdeh, assistant professor of computing in Michigan Tech’s College of Computing and one of the study’s lead researchers. He works on bringing the sensors’ data together through an AI process called sensor fusion.

“Sensor fusion uses multiple sensors of different modalities to understand a scene,” he said. “You cannot exhaustively program for every detail when the inputs have difficult patterns. That’s why we need AI.”

Rawashdeh’s Michigan Tech collaborators include Nader Abu-Alrub, his doctoral student in electrical and computer engineering, and Jeremy Bos, assistant professor of electrical and computer engineering, along with master’s degree students and graduates from Bos’s lab: Akhil Kurup, Derek Chopp and Zach Jeffries.

Bos explains that lidar, infrared and other sensors on their own are like the hammer in an old adage. “‘To a hammer, everything looks like a nail,’” quoted Bos. “Well, if you have a screwdriver and a rivet gun, then you have more options.”

Snow, Deer and Elephants

Most autonomous sensors and self-driving algorithms are being developed in sunny, clear landscapes. Knowing that the rest of the world is not like Arizona or southern California, Bos’s lab began collecting local data in a Michigan Tech autonomous vehicle (safely driven by a human) during heavy snowfall. Rawashdeh’s team, notably Abu-Alrub, pored over more than 1,000 frames of lidar, radar and image data from snowy roads in Germany and Norway to start teaching their AI program what snow looks like and how to see past it.

“All snow is not created equal,” Bos said, pointing out that the variety of snow makes sensor detection a challenge. Rawashdeh added that pre-processing the data and ensuring accurate labeling is an important step to ensure accuracy and safety: “AI is like a chef: if you have good ingredients, there will be an excellent meal,” he said. “Give the AI learning network dirty sensor data and you’ll get a bad result.”

Low-quality data is one problem and so is actual dirt. Much like road grime, snow buildup on the sensors is a solvable but bothersome issue. Once the view is clear, autonomous vehicle sensors are still not always in agreement about detecting obstacles. Bos mentioned a great example of discovering a deer while cleaning up locally gathered data. Lidar said that blob was nothing (30% chance of an obstacle), the camera saw it like a sleepy human at the wheel (50% chance), and the infrared sensor shouted WHOA (90% sure that is a deer).

Getting the sensors and their risk assessments to talk and learn from each other is like the Indian parable of three blind men who find an elephant: each touches a different part of the elephant (the creature’s ear, trunk and leg) and comes to a different conclusion about what kind of animal it is. Using sensor fusion, Rawashdeh and Bos want autonomous sensors to collectively figure out the answer, be it elephant, deer or snowbank. As Bos puts it, “Rather than strictly voting, by using sensor fusion we will come up with a new estimate.”
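
To make the difference between voting and fusing concrete, here is a minimal Python sketch that uses the three deer readings quoted above. It is an illustration only: the researchers' system is a CNN, and the article does not describe its internals. The fusion rule below is a simple stand-in (naive-Bayes combination in log-odds space), and it assumes the sensors err independently and that an obstacle is as likely as not beforehand. The function names `majority_vote` and `fuse_log_odds` are hypothetical.

```python
import math


def majority_vote(probs, threshold=0.5):
    """Strict voting: each sensor votes 'obstacle' only if it is above the threshold."""
    votes = sum(p > threshold for p in probs)
    return votes > len(probs) / 2


def fuse_log_odds(probs, prior=0.5):
    """Illustrative fusion rule (an assumption, not the researchers' CNN).

    Combines per-sensor probabilities in log-odds space, treating the
    sensors as conditionally independent. Inputs must lie strictly
    between 0 and 1, otherwise the logarithm is undefined.
    """
    def logit(p):
        return math.log(p / (1.0 - p))

    prior_logit = logit(prior)
    # Each sensor contributes the evidence it adds beyond the shared prior.
    total = prior_logit + sum(logit(p) - prior_logit for p in probs)
    return 1.0 / (1.0 + math.exp(-total))


# Readings from the deer example: lidar 30%, camera 50%, infrared 90%.
readings = {"lidar": 0.30, "camera": 0.50, "infrared": 0.90}
probs = list(readings.values())

print("majority vote says obstacle:", majority_vote(probs))
print("fused obstacle probability:", round(fuse_log_odds(probs), 3))
```

Under these assumptions, strict voting dismisses the deer because only the infrared sensor is above 50%, while the fused estimate comes out near 79%, since the confident infrared reading outweighs the lidar's doubt. A learned fusion network can go further than this hand-written rule by weighting each sensor according to the conditions it sees.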

While navigating a Keweenaw blizzard is a ways out for autonomous vehicles, their sensors can get better at learning about bad weather and, with advances like sensor fusion, will be able to drive safely on snowy roads one day.

Michigan Technological University is a public research university, home to more than 7,000 students from 54 countries. Founded in 1885, the University offers more than 120 undergraduate and graduate degree programs in science and technology, engineering, forestry, business and economics, health professions, humanities, mathematics, and social sciences. Our campus in Michigan’s Upper Peninsula overlooks the Keweenaw Waterway and is just a few miles from Lake Superior.